Voice Dictation for ML Engineers

Document models, track experiments, ship to production faster

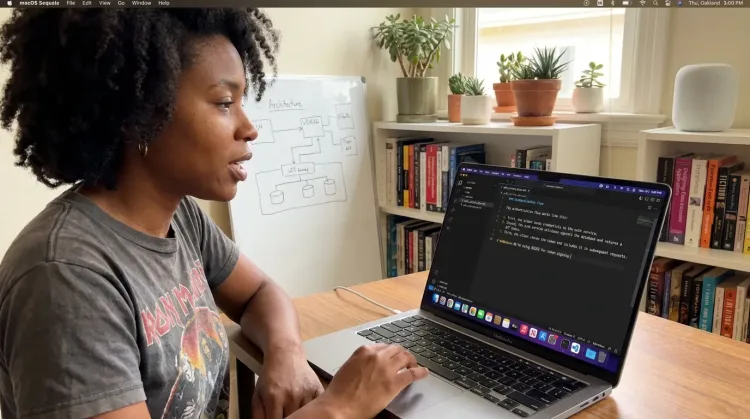

Why Ottex works for ML engineers

Concrete benefits with real-world impact

3x Faster Than Typing

Speak 150+ words per minute vs 40 wpm typing. Finish tasks in a third of the time.

Better Context, Better Results

Speaking naturally gives AI agents more context than typing. Describe your intent, explain edge cases, mention file names: your voice carries nuance that typing tends to cut short.

Technical Term Accuracy

Cloud AI models excel at technical jargon, code terminology, and specialized vocabulary.

Custom Dictionary

Add names, technical terms, and jargon. Ottex transcribes them accurately every time.

Inline AI Commands

Transform text as you speak: 'Ottex start fix grammar Ottex end'. Edit, improve, translate—all by voice.

Precision Typing

Say 'new paragraph', 'open quote', 'em dash', 'ellipsis'. Full formatting control by voice.

Works with AI Chatbots

Dictate prompts to ChatGPT, Claude, Perplexity—anywhere you type. Better prompts, better answers.

Voice Corrections

Say 'scratch that' to delete mistakes, 'actually' to rephrase. Fix errors without stopping your flow or touching the keyboard.

What you can do

Write Documentation

Dictate technical documentation, READMEs, or API docs. Speaking lets you explain context, edge cases, and examples naturally—better docs with less effort.

Prompt ChatGPT & Claude

Dictate prompts to ChatGPT, Claude, Perplexity, or any AI chatbot. Speaking naturally lets you give more context—better context means better results.

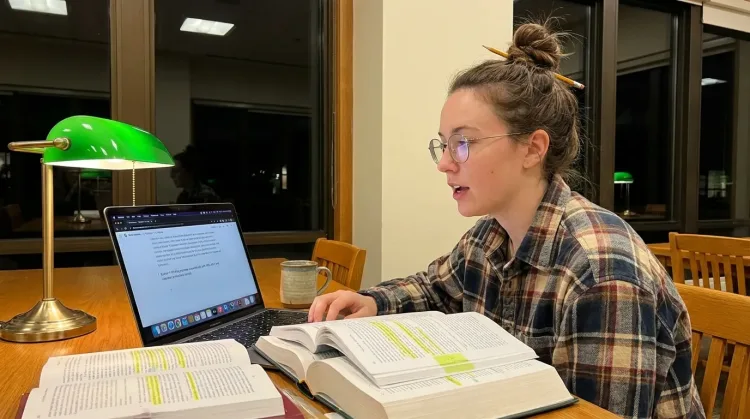

Write Research Notes

Dictate research notes, literature summaries, or analysis. Capture your thinking process without losing momentum to typing.

Other ways Ottex helps ML engineers

Capture Ideas Quickly

Speak ideas the moment they hit you. Use Ottex anywhere to capture thoughts, then refine them later.

Reply to Work Messages

Reply to Slack messages 3x faster. Dictate casual updates, detailed explanations, or quick acknowledgments without switching context.

Create Tickets & Tasks

Dictate Jira tickets, Linear issues, or Asana tasks. Speak the context, acceptance criteria, and details—then move on.

Write Meeting Notes

Dictate meeting notes in real time or right after. Capture action items, decisions, and key points while they're fresh in your mind.

Write Status Updates

Dictate standup updates, weekly reports, or project status. Speak what happened and what's next—done.

Frequently asked questions

Does it handle ML and deep learning terminology?

Yes. Cloud models like Gemini 3 Flash are trained on ML papers, PyTorch docs, TensorFlow guides, and thousands of GitHub repos. They handle 'transformer architecture,' 'gradient checkpointing,' 'mixed precision training,' 'distributed data parallel,' and other technical terms accurately. For project-specific terms (model names, internal pipelines), add them to your custom dictionary.

Can I dictate into Jupyter notebooks in VS Code?

Yes. Ottex works anywhere you can type on macOS, including Jupyter notebooks in VS Code, JupyterLab in the browser, and Google Colab. Dictate markdown cells for experiment notes, code comments, and architecture documentation.

How does it help with debugging training issues?

AI assistants like ChatGPT are invaluable for debugging training problems. But explaining your model architecture, loss behavior, and what you've already tried takes time to type. Speaking lets you explain the full context in 60 seconds: your model, the data, the symptoms, what you've tried. Better context = better debugging suggestions.

Will it slow down my machine during model training?

No. Ottex uses less than 2% CPU because it's native Swift, not Electron. Cloud transcription happens via API. When you're training models on GPU or running distributed jobs, Ottex won't compete for compute resources.

Can I use it for MLOps documentation?

Absolutely. Deployment runbooks, model cards, monitoring playbooks, and incident postmortems all benefit from voice dictation. You can explain complex pipelines naturally, including edge cases and failure modes you'd skip while typing. Work modes let you format differently for Notion docs vs Slack updates.

How much does it cost?

Free forever with local models (Whisper runs on your Mac). Or bring your own API key for cloud models; most ML engineers spend ~$1-3/mo. If you want zero setup, the $9/mo premium tier includes Gemini 3 Flash with 99.1% accuracy.

Start documenting models faster

Download free. Use local models immediately, or add your API key for cloud quality. Start dictating experiment notes and deployment docs in minutes.

Try for free

No credit card required.